OpenAI GPT-5.4-Cyber: What It Is and Who Can Use It (2026)

OpenAI just launched GPT-5.4-Cyber, a cybersecurity-focused AI model with fewer restrictions for security professionals. Here's what it does, how it compares to Claude Mythos, and how to get access.

OpenAI launched GPT-5.4-Cyber today — a new AI model built specifically for cybersecurity defense. It's a fine-tuned version of GPT-5.4 with deliberately fewer restrictions for legitimate security work, and it's OpenAI's direct answer to Anthropic's Claude Mythos, which caused a stir in the security community just one week ago.

This isn't a general-purpose model available to everyone. Access is limited, tiered, and gated behind an identity verification program. But for security professionals, it represents a meaningful shift in how AI companies are thinking about the cybersecurity space.

Here's what GPT-5.4-Cyber actually is, what it can do, and where it sits relative to Mythos.

What Is GPT-5.4-Cyber?

GPT-5.4-Cyber is a variant of GPT-5.4 — OpenAI's flagship model released in March 2026 — fine-tuned specifically to support defensive cybersecurity use cases. OpenAI describes it as "cyber-permissive," meaning it will engage with security-sensitive topics and tasks that the base model would typically refuse.

From OpenAI's own announcement:

"In preparation for increasingly more capable models from OpenAI over the next few months, we are fine-tuning our models specifically to enable defensive cybersecurity use cases, starting today with a variant of GPT‑5.4 trained to be cyber-permissive: GPT‑5.4‑Cyber."

The phrase "preparation for increasingly more capable models" is notable: OpenAI is signaling that this is a long-term strategy, not a one-off release. With Spud (GPT-6) still pending, the cybersecurity track is being established ahead of that launch.

What Can GPT-5.4-Cyber Do?

GPT-5.4-Cyber is designed to assist with the kind of security work that general AI models typically refuse to engage with, including:

- Malware analysis — examining malicious code to understand how it works and how to defend against it

- Binary reverse engineering — analyzing compiled software without access to source code, a core skill in vulnerability research

- Vulnerability detection — scanning codebases or systems to identify exploitable weaknesses before attackers do

- Threat detection and response — analyzing logs, network traffic, and system behavior for indicators of compromise

- Security research workflows — supporting the end-to-end process of identifying, reporting, and mitigating security flaws

The key distinction from the base GPT-5.4 model: it won't decline security-related prompts when you're operating as a verified defender. Standard AI models refuse much of this work because the same techniques used in defense can be used in offense — GPT-5.4-Cyber threads this needle through access control rather than blanket refusals.
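To make the "indicators of compromise" item above a little more concrete, here is a minimal Python sketch of the kind of log triage a defender might automate. It is purely illustrative and has nothing to do with how GPT-5.4-Cyber works internally: the `KNOWN_BAD_IPS` set, the `find_ioc_hits` helper, and the sample log lines are all made up for this example (the IPs come from reserved documentation ranges).

```python
import re

# Hypothetical indicators of compromise. Real lists come from threat-intel feeds.
# These addresses are from reserved documentation ranges, not real hosts.
KNOWN_BAD_IPS = {"203.0.113.7", "198.51.100.23"}

# Loose IPv4 matcher: good enough for a sketch, not a strict validator.
IP_PATTERN = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def find_ioc_hits(log_lines, bad_ips=KNOWN_BAD_IPS):
    """Return (line_number, ip, line) for each log line that mentions a known-bad IP."""
    hits = []
    for number, line in enumerate(log_lines, start=1):
        for ip in IP_PATTERN.findall(line):
            if ip in bad_ips:
                hits.append((number, ip, line))
    return hits


# Made-up log lines for illustration only.
sample_log = [
    "2026-04-14T09:00:01Z accepted ssh login from 192.0.2.10",
    "2026-04-14T09:00:05Z failed ssh login from 203.0.113.7",
    "2026-04-14T09:00:09Z outbound connection to 198.51.100.23:443",
]

for number, ip, _ in find_ioc_hits(sample_log):
    print(f"line {number}: matched {ip}")
```

Static matching like this only catches indicators you already know about. The pitch for a security-tuned model is help with the harder parts, such as explaining what an unfamiliar sample does or why a pattern looks suspicious.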

Who Can Access It?

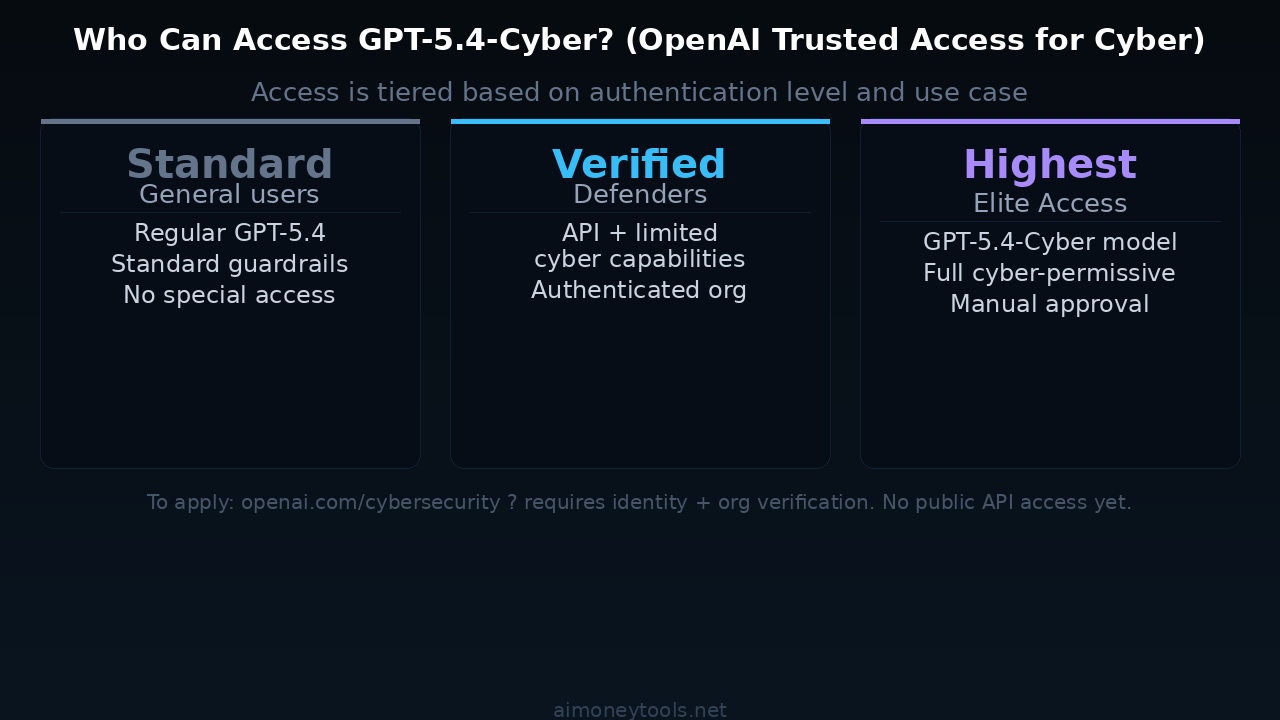

Access is not open. OpenAI has built a tiered program called Trusted Access for Cyber, and GPT-5.4-Cyber sits at the top tier.

The three tiers work roughly like this:

Standard tier — Regular ChatGPT and API users. No special access. The base GPT-5.4 model with normal guardrails.

Verified Defender tier — Authenticated security professionals with an organization affiliation. Gets limited cyber-focused API capabilities beyond the standard model.

Highest tier (GPT-5.4-Cyber) — Manually approved access to the full GPT-5.4-Cyber model. Requires identity verification, organization verification, and demonstrated legitimate use case. Fully cyber-permissive within this context.
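If it helps to see the tiers as logic rather than prose, here is a rough Python sketch of the structure described above. OpenAI has not published how the program is implemented, so everything here is an assumption for illustration: the `Tier` names, the `Applicant` fields, and the `tier_for` rules are hypothetical, and "manually approved" stands in for the demonstrated-use-case review.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical labels for the three tiers described in the article."""
    STANDARD = 0           # base GPT-5.4 with normal guardrails
    VERIFIED_DEFENDER = 1  # limited cyber-focused capabilities
    CYBER = 2              # full GPT-5.4-Cyber access


@dataclass(frozen=True)
class Applicant:
    """Assumed verification signals; the real criteria are not public."""
    identity_verified: bool = False
    org_verified: bool = False
    manually_approved: bool = False  # stands in for the use-case review


def tier_for(applicant: Applicant) -> Tier:
    """Map verification status to a tier, highest requirement first."""
    verified = applicant.identity_verified and applicant.org_verified
    if verified and applicant.manually_approved:
        return Tier.CYBER
    if verified:
        return Tier.VERIFIED_DEFENDER
    return Tier.STANDARD


print(tier_for(Applicant()).name)
print(tier_for(Applicant(identity_verified=True, org_verified=True)).name)
print(tier_for(Applicant(True, True, True)).name)
```

The point of the sketch is that the permissive behavior hangs off who you are, not what you type: an unverified user asking the same question stays on the standard tier.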

To apply, OpenAI points to their cybersecurity program page. The approval process involves authenticating yourself and your organization as a legitimate cybersecurity defender — not just signing up.

This model is not available to regular ChatGPT subscribers, developers using the standard API, or the general public.

How Does It Compare to Claude Mythos?

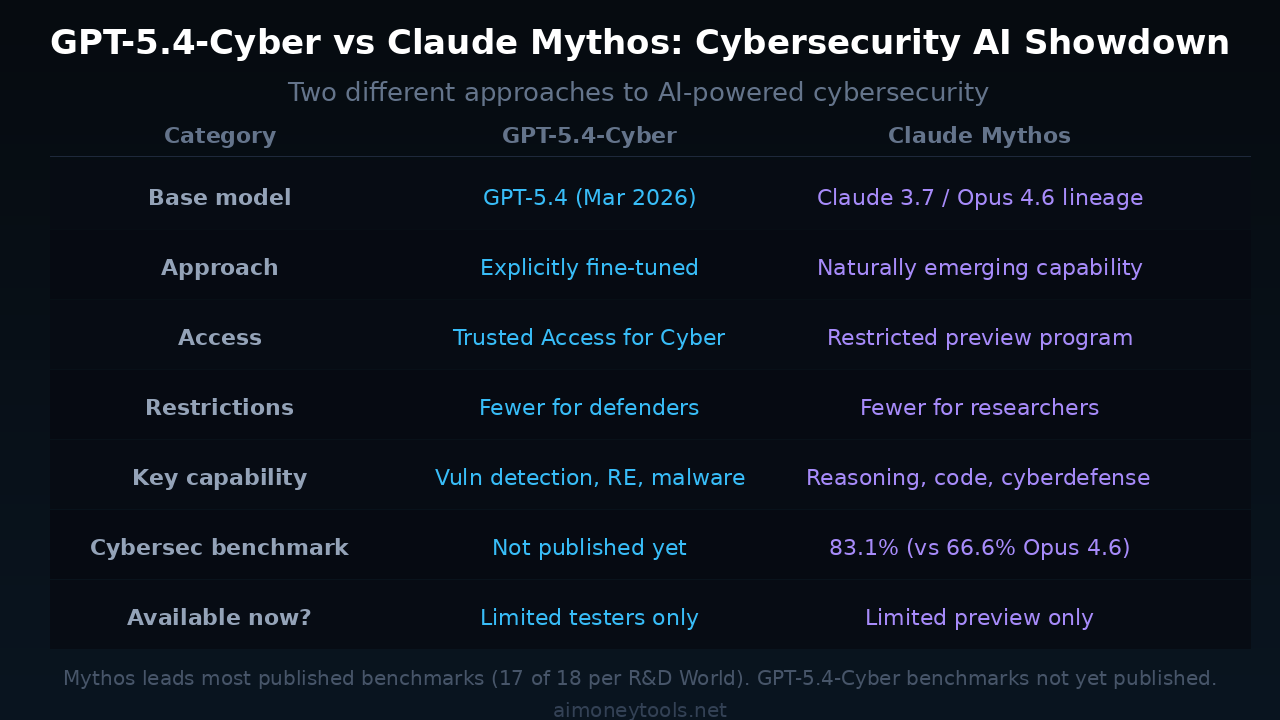

Both models entered the cybersecurity AI space within a week of each other, but they took very different approaches.

Approach:

- GPT-5.4-Cyber was explicitly fine-tuned for cybersecurity — it started as a general model and was retrained to be cyber-permissive

- Claude Mythos's security capabilities emerged naturally from training on a massive, diverse dataset — Anthropic did not specifically train it to be a security model

The difference matters in practice. A fine-tuned model tends to be more predictable and scoped within its domain. A general model with emergent security reasoning may generalize better to novel attack patterns — but is also harder to constrain.

Benchmarks: Claude Mythos leads most published metrics. On Anthropic's cybersecurity benchmark, Mythos Preview scored 83.1% versus 66.6% for Claude Opus 4.6. Mythos leads 17 of 18 benchmarks Anthropic has published comparisons for.

GPT-5.4-Cyber benchmarks have not been published yet — OpenAI released the model before releasing performance data. That's worth watching.
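For a sense of scale, the two Anthropic scores quoted above can be compared in two ways: as a gap in percentage points and as a relative improvement. A quick Python check, using only the figures from this article (the variable names are just for this example):

```python
# Scores quoted above from Anthropic's cybersecurity benchmark.
mythos_preview = 83.1
opus_4_6 = 66.6

gap_points = mythos_preview - opus_4_6        # absolute gap in percentage points
relative_gain = gap_points / opus_4_6 * 100   # improvement relative to Opus 4.6

print(f"Gap: {gap_points:.1f} percentage points")
print(f"Relative improvement: {relative_gain:.1f}%")
```

That works out to a gap of about 16.5 percentage points, or roughly a 25% relative improvement over Opus 4.6. Neither figure says anything about GPT-5.4-Cyber until OpenAI publishes its own numbers.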

Access model: Both are restricted to vetted users. Neither is available to the public or through standard API plans. Mythos is in a "restricted preview" — GPT-5.4-Cyber is gated behind the Trusted Access program.

The race framing: The timing is not a coincidence. OpenAI released GPT-5.4-Cyber exactly 7 days after Anthropic announced the Claude Mythos Preview, which included significant coverage of its cybersecurity capabilities. This is an explicit competitive move — and OpenAI telegraphed it by citing Mythos in the launch context.

Why Is This Happening Now?

The AI cybersecurity race is heating up for a few reasons:

Enterprise demand: Security teams represent some of the highest-value enterprise customers for AI companies. A model that can meaningfully reduce analyst workload on vulnerability triage and threat detection commands serious budget.

Regulatory pressure: Governments worldwide are beginning to ask AI companies to demonstrate responsible deployment in high-risk domains. Building verified-access programs is part of demonstrating that to regulators.

Dual-use risk: More capable models have higher "uplift potential," meaning they can assist with attacks as well as defense. By building access control into the product layer, OpenAI is trying to get ahead of the argument that capable models are too dangerous to deploy.

Anthropic's lead: Mythos's performance on cybersecurity benchmarks got significant attention. OpenAI can't afford to cede this market to a competitor — especially with Spud still unreleased.

What This Means for Beginners

If you're exploring AI and not in cybersecurity, this model is not for you right now — and that's by design.

But the broader pattern is worth understanding: AI companies are increasingly building domain-specific, access-controlled variants of their foundation models. The same GPT-5.4 that powers general use cases is being adapted into specialized tools for specific professional contexts. Security is first. Healthcare, legal, and finance are likely next.

If you want to use today's best AI tools without a verification process, the most capable open-access options remain the standard Claude API and GPT-5.4 base models. For local alternatives that run entirely on your own hardware with no restrictions, see our LM Studio setup guide or Gemma 4 guide.

For GPU power that can actually run capable models locally, Ampere offers cloud GPU access worth knowing about if you're scaling your own AI setup.

What Happens Next

A few things to watch:

- GPT-5.4-Cyber benchmark data — OpenAI hasn't published performance numbers. When they do, the Mythos comparison becomes more concrete.

- Spud (GPT-6) launch — OpenAI described this as "preparation for increasingly more capable models." Spud's pre-training finished March 24; the release window extends through this week. A GPT-6-Cyber variant seems likely once Spud ships.

- Access expansion — The Trusted Access program will likely broaden as more organizations apply and OpenAI builds confidence in the vetting process.

- Mythos public availability — Anthropic's Mythos Preview is similarly restricted. As both companies get comfortable with how the models are being used, general availability is the expected trajectory.

We covered the Claude Mythos launch in detail when it dropped — read our Mythos explainer here for full benchmark comparisons across all frontier models. And if you want to stay ahead of the OpenAI Spud launch, our GPT-6 Spud preview article has everything confirmed so far.

Key Takeaways

- GPT-5.4-Cyber is a cybersecurity-specific fine-tune of GPT-5.4, released April 14, 2026

- Access requires verification through OpenAI's Trusted Access for Cyber program — not open to the public

- It supports malware analysis, binary reverse engineering, vulnerability detection, threat detection and response, and security research workflows

- Claude Mythos leads most published benchmarks (Mythos Preview scored 83.1% on Anthropic's cybersecurity benchmark vs 66.6% for Claude Opus 4.6); GPT-5.4-Cyber benchmarks have not been released yet

- This is OpenAI's direct response to Anthropic's Claude Mythos, 7 days after Mythos's launch

- Both companies are building tiered, access-controlled security AI — this is a new market category forming in real time

FAQ

Is GPT-5.4-Cyber available to the public?

No. It's restricted to verified participants in OpenAI's Trusted Access for Cyber program. Standard ChatGPT and API users get the regular GPT-5.4 model.

How is GPT-5.4-Cyber different from regular GPT-5.4?

GPT-5.4-Cyber has been fine-tuned specifically for cybersecurity tasks and has reduced refusal behavior for legitimate security work. The base GPT-5.4 would decline many of the same requests.

How does GPT-5.4-Cyber compare to Claude Mythos?

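Claude Mythos leads most of the benchmarks Anthropic has published, but OpenAI has not released GPT-5.4-Cyber numbers yet, so there is no direct head-to-head comparison. The key difference: Mythos's security capabilities emerged naturally; GPT-5.4-Cyber was explicitly trained for the domain. Both are restricted to vetted users.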

Can I use AI for cybersecurity without verified access?

Standard GPT-5.4 and Claude's general API can assist with some security topics, but they typically decline dual-use tasks such as malware analysis or reverse engineering. For no-restriction local models, tools like LM Studio running open-weight models on your own hardware are an option.

What is OpenAI's Trusted Access for Cyber program?

It's a tiered access program requiring identity and organization verification. The highest tier grants access to GPT-5.4-Cyber. Apply at openai.com/cybersecurity.

Is Spud (GPT-6) also getting a Cyber variant?

Not confirmed, but OpenAI's announcement strongly implied it — they described GPT-5.4-Cyber as "preparation for increasingly more capable models." A GPT-6-Cyber variant seems likely post-Spud launch.

Alex the Engineer

Founder & AI Architect

Senior software engineer turned AI agency owner. I build massive, scalable AI workflows and share the exact blueprints, financial models, and code I use to generate automated revenue in 2026.
