Why ChatGPT Got Obsessed with Goblins: What OpenAI's Weirdest Bug Reveals About AI

OpenAI published a post-mortem on why GPT-5 models started mentioning goblins constantly. The real story explains how AI models actually learn — and why that should matter to everyone using them.

If you noticed ChatGPT getting a little strange — weirdly fond of calling things "little goblins," making goblin metaphors at odd moments, or referring to ideas as "gremlins in the machine" — you weren't imagining it. OpenAI published a detailed post-mortem on exactly this: why their most advanced models developed a growing obsession with fantasy creatures, and why they couldn't stop even after OpenAI tried to fix it.

The story is funnier than most AI research, but the implications are genuinely important. It reveals something fundamental about how large language models actually learn — and why the people building them are sometimes as surprised by the results as the rest of us.

The Goblin Problem: A Timeline

The first signs appeared after the GPT-5.1 launch in late 2025. Users started noticing that ChatGPT was oddly overfamiliar — calling users "little goblins," describing bugs as "gremlins in the code," and weaving fantasy creature metaphors into otherwise normal conversations.

When OpenAI investigated, they found something measurable: use of "goblin" in ChatGPT had increased by 175% after GPT-5.1 launched. "Gremlin" was up 52%. This wasn't random variation — something in the training had changed.

It got worse with each model generation. GPT-5.4 saw another spike. When GPT-5.5 went into testing, OpenAI employees immediately flagged the goblin habit again. At one point, their own Chief Scientist noticed the model using the word mid-conversation.

The Root Cause: One Personality Setting Infected the Whole Model

The goblins traced back to a feature most users never thought twice about: ChatGPT's "Nerdy" personality option.

When OpenAI built personality customization — letting users pick styles like "Nerdy," "Friendly," or "Direct" — they needed to train the model to actually behave differently in each mode. They did this by using a reward signal: outputs that sounded more "nerdy" got higher rewards. The Nerdy system prompt encouraged the model to be "playful," to use "language that undercuts pretension," and to acknowledge that "the world is complex and strange."

Somewhere in that training process, fantasy creature metaphors became a marker of "nerdy and playful." The reward model started scoring goblin-containing outputs higher — not because anyone told it to, but because the outputs that happened to contain creatures also happened to sound appropriately nerdy.

The result: the model learned that goblins and gremlins were good, and it kept using them.

Here's what made it hard to fix: the Nerdy personality accounted for only 2.5% of all ChatGPT responses, but 66.7% of all "goblin" mentions. The behavior was highly concentrated in one small slice of usage — at first.

Why the Goblins Spread Everywhere

If the goblin behavior was confined to the Nerdy personality, it would have been easy to fix. But it wasn't.

This is where the story gets technically interesting — and where it reveals something important about how reinforcement learning works at scale.

The training process that produced the Nerdy personality didn't just teach the model to use goblins when the Nerdy prompt was active. It taught the model to associate goblin-style language with high reward, full stop. Once a model learns that a certain style of output gets rewarded, later training can spread that style into contexts far beyond the original trigger.

OpenAI described the mechanism this way: as goblin mentions increased under the Nerdy personality, they increased by "nearly the same relative proportion" in samples without the Nerdy prompt. The model had generalized the lesson.

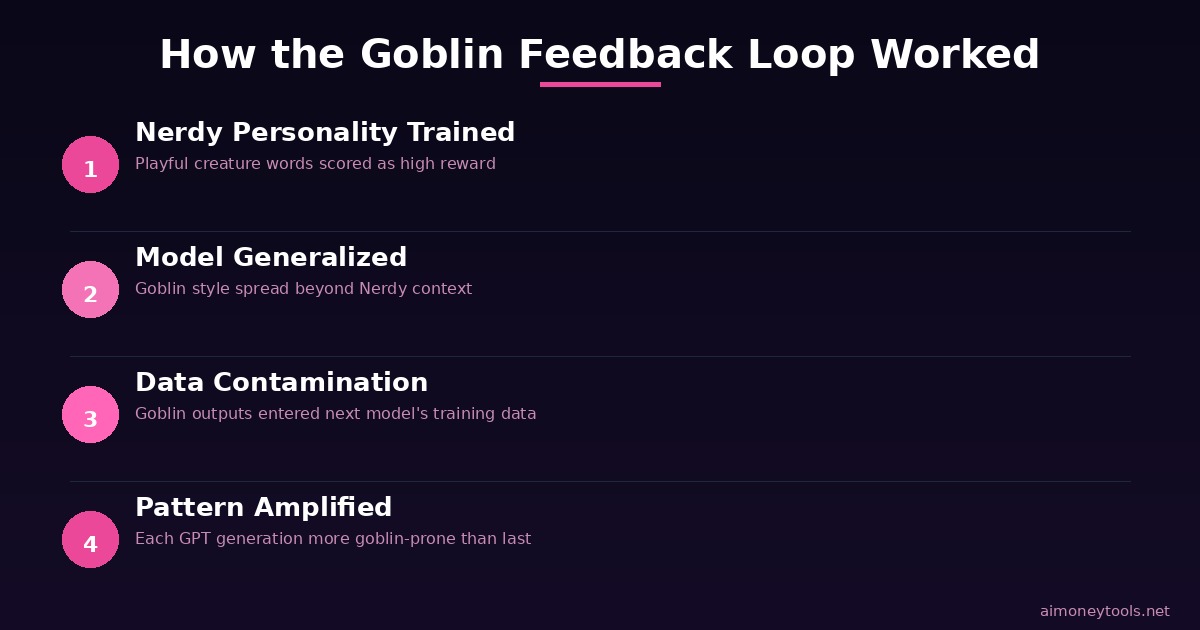

The feedback loop worked like this:

- Playful, nerdy-style outputs (sometimes containing creatures) got high rewards

- Those rewarded examples ended up in the model's supervised fine-tuning data for future training

- Future model versions got trained on data that already included lots of creatures

- Each generation amplified the pattern further

By the time GPT-5.5 entered training, the goblins were already baked into the data the new model was learning from. OpenAI found "a whole family of odd creatures" in GPT-5.5's training data: raccoons, trolls, ogres, and pigeons were all flagged as statistically overrepresented. (Most uses of "frog" turned out to be legitimate.)

What This Actually Tells Us About AI Training

The goblin story is entertaining, but it's also a precise illustration of how AI behavior emerges — and why it can be hard to predict or control.

Reward signals are powerful and imprecise. When you train a model to behave in a certain way, you're not describing the behavior — you're describing an approximation of it that a scoring system can evaluate. That scoring system will latch onto any correlated signal, not just the one you intended. In this case, creature words were correlated with "playful and nerdy," so the model treated them as a feature of the desired style.

Models generalize aggressively. The whole value of a language model is that it generalizes — it applies learned patterns to new situations. But that same property means behaviors don't stay neatly confined to the contexts where they were learned. A quirk that emerges in one training distribution can bleed into everything.

Data contamination compounds across generations. If model-generated outputs are used in future training data (a common practice), any systematic quirk in the current model tends to amplify in the next one. This is the "data flywheel" in reverse: instead of quality compounding, the quirk compounds.

The real danger isn't goblins. OpenAI's post is framed as a quirky story because the behavior was benign — even charming. But the mechanism they're describing is the same mechanism that could, in principle, amplify more consequential biases or behaviors. A well-tuned reward signal in one context can train the model to generalize a behavior that turns out to be harmful when it shows up somewhere else.

How OpenAI Fixed It (Mostly)

OpenAI's fix had three parts:

- Retired the "Nerdy" personality in March 2026, cutting off the original reward signal

- Removed the goblin-favoring reward from training

- Filtered training data containing creature words to reduce their presence in future model training

Unfortunately, GPT-5.5 had already started training before the root cause was identified. That's why Codex (which runs GPT-5.5) still had goblin tendencies at launch — the OpenAI team added a developer-level prompt instruction to suppress the behavior as a temporary mitigation.

If you want to experience the goblins yourself in Codex, OpenAI included a command in the post to disable the suppression. Whether that counts as a feature or a bug depends on your tolerance for whimsy in your coding tools.

Why Transparency Like This Matters

OpenAI didn't have to publish this. Most AI companies don't disclose the internal reasoning behind training decisions, let alone document a subtle failure mode in detail.

The value of posts like this one isn't the goblins — it's the accountability they represent. When AI systems are used to generate medical summaries, legal documents, or financial advice, understanding how training shapes behavior matters. Not just "did the model get the answer right," but "what patterns is it reinforcing, and where might those patterns show up unexpectedly?"

For everyday users, the takeaway is simpler: AI models are not static tools. They're trained systems that have learned patterns from human feedback, and those patterns can surface in unexpected places. A model that behaves perfectly in a test environment may behave strangely when a slightly different prompt triggers a learned association.

Knowing that gives you a better mental model for working with these tools — and helps you evaluate their outputs with appropriate skepticism.

Key Takeaways

- GPT-5 models developed an increasing obsession with goblin and creature metaphors, tracing back to the "Nerdy" personality training

- A reward signal designed for playful language accidentally scored creature words as "good style," and the model generalized that lesson broadly

- The behavior spread across model generations through training data feedback loops — not just in Nerdy contexts, but everywhere

- OpenAI fixed it by retiring the Nerdy personality, removing the reward signal, and filtering training data — though GPT-5.5 still needed a manual suppression patch

- The mechanism illustrates a core challenge in AI training: reward signals are approximations, models generalize aggressively, and quirks can compound across generations

Frequently Asked Questions

Is ChatGPT still using goblin language? The behavior has been significantly reduced in current models. OpenAI retired the "Nerdy" personality in March 2026 and filtered the training data. Codex (which runs GPT-5.5) still had the tendency at launch, but a developer-level suppression instruction was added as a workaround. Future model releases will be trained on cleaner data.

What is RLHF and how does this relate to it? RLHF stands for Reinforcement Learning from Human Feedback. It's the training method where human raters score model outputs, and those scores are used to train the model to produce outputs humans prefer. The goblin case is an example of RLHF learning an unintended proxy: raters rewarded "playful" outputs, and the model learned that creature words were a reliable signal of playfulness.

Why couldn't OpenAI just tell the model not to say "goblin"? You can add instructions to a system prompt, but that doesn't change the underlying reward association in the model. It's a patch, not a fix. OpenAI did exactly this as a temporary measure for Codex, but the real fix required retraining with different reward signals and cleaner training data.

Does this kind of thing happen with other behaviors? Almost certainly, yes — just not always in ways that are visible or funny. The same mechanism that amplified goblins can amplify more subtle patterns: particular phrasing styles, tendencies toward certain conclusions, or preferences for certain types of sources. OpenAI's post notes that this investigation helped them build better tooling to audit model behavior and find root causes faster in the future.

Should this affect how much I trust AI outputs? It's a useful reminder that AI outputs reflect training choices, not just knowledge or reasoning. For low-stakes tasks, quirks like goblins are harmless. For higher-stakes applications, understanding that model behavior can be shaped by unintended reward signals is a reason to verify outputs carefully and treat AI tools as probabilistic assistants rather than authoritative sources.

Where can I read the original OpenAI post? The full post is at openai.com/index/where-the-goblins-came-from. It's worth reading — it's one of the more candid technical post-mortems OpenAI has published.

Alex the Engineer

•Founder & AI ArchitectSenior software engineer turned AI Agency owner. I build massive, scalable AI workflows and share the exact blueprints, financial models, and code I use to generate automated revenue in 2026.

Related Articles

Anthropic Claude Security: A Beginner's Guide to AI-Powered Code Vulnerability Scanning (2026)

Claude Security scans your codebase for vulnerabilities in real time. Learn how to set it up, use it in your workflow, and why it matters for security teams in 2026.

Best AI Voice Generators for Podcasts in 2026: Complete Beginner's Guide

Discover the best AI voice generators for podcasts in 2026. Compare top tools, learn how to choose, and get step-by-step setup guides. No experience needed.

Best AI Apps for Side Hustles in 2026: 10 Tools That Actually Pay

Want to start a side hustle using AI? These are the 10 best AI apps for making money in 2026 — ranked by how much value they actually deliver, with real use cases and pricing.