How to Use Groq API: Get Ultra-Fast AI Inference for Free (2026 Guide)

Learn how to set up and use the Groq API in Python. Step-by-step tutorial covering free tier, authentication, and your first API call.

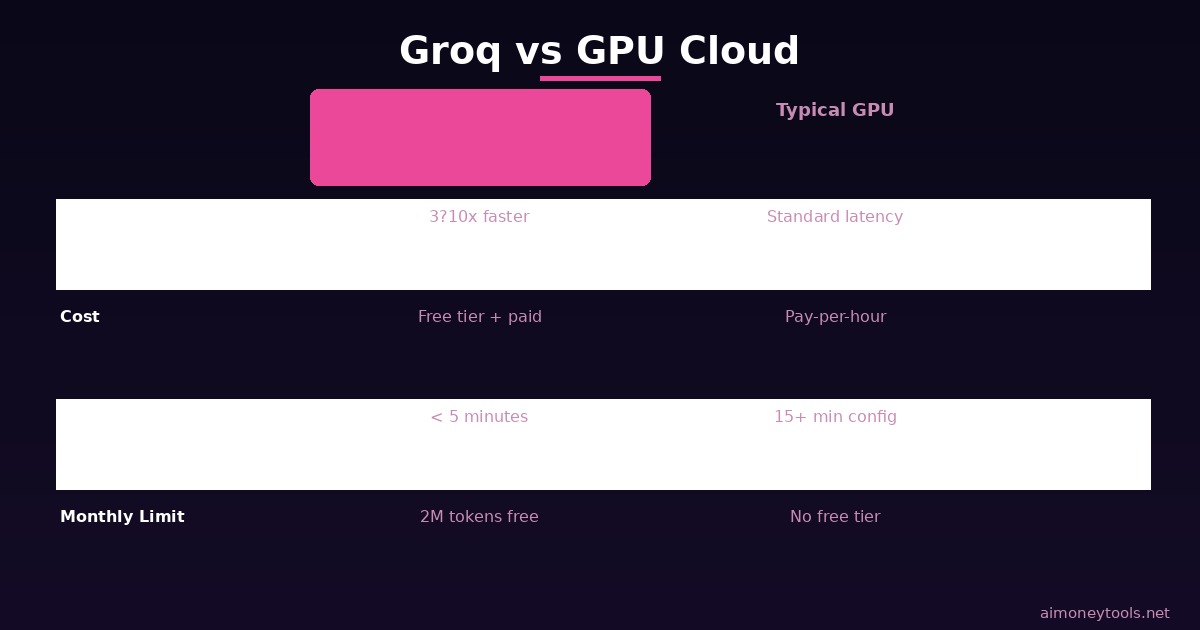

Groq's LPU (Language Processing Unit) is the fastest AI inference engine available in 2026. It's 3–10x faster than GPU-based alternatives, with a free tier that gives you 2 million tokens per month.

If you're building AI applications and you're tired of waiting for responses or paying exorbitant API costs, Groq is a game-changer.

This guide walks you through setting up the Groq API, making your first request, and building a simple AI chatbot — all in under 10 minutes.

What Is Groq? (And Why It's So Fast)

Groq's LPU is a specialized chip designed for AI inference. Unlike GPUs (which are general-purpose), LPUs are built for one job: running language models as fast as possible.

The result:

- Sub-100ms latency: the first tokens of a response can arrive faster than you can blink

- Fast sequential token generation: language models produce output one token at a time, and the LPU is designed to make each of those steps fast, which keeps latency low for real-time apps

- Free tier: 2 million tokens per month at no cost

Groq powers models like:

- Mixtral 8x7B (open-source, mixture-of-experts, text-only)

- LLaMA 3.1 (Meta's model, 405B parameter version available)

- Whisper (speech-to-text)

- Gemma (Google's lightweight model)

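Proprietary models like Claude or GPT-4 are not available on Groq. What Groq does offer is an OpenAI-compatible API, so code written for OpenAI's SDK can usually be pointed at Groq by changing the base URL and API key.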

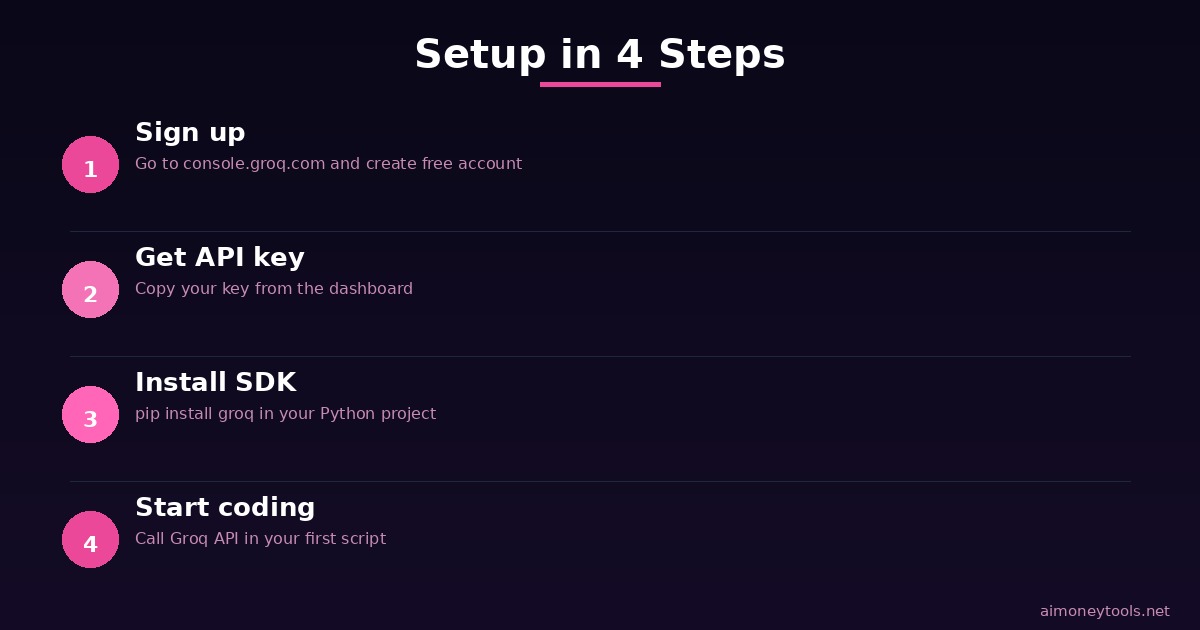

Step 1: Sign Up for Groq

Head to console.groq.com and create a free account.

You'll need:

- Email address

- Password

- Optional: GitHub account to sign up faster

Once you log in, you'll see the Groq Console. Go to API Keys in the left sidebar.

Click "Create API Key" and copy it somewhere safe. You'll use this in your Python code.
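Rather than pasting the key into your source code, keep it in the `GROQ_API_KEY` environment variable (the variable the SDK reads in Step 3) and fail fast if it is missing. The following is a minimal sketch. `load_groq_api_key` is a hypothetical helper. The `gsk_` prefix check assumes keys look like the example key used later in this guide, so it only prints a warning rather than rejecting the key.

```python
import os
from collections.abc import Mapping


def load_groq_api_key(env: Mapping = os.environ) -> str:
    """Return the Groq API key from the environment, failing fast if it is absent."""
    key = env.get("GROQ_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "GROQ_API_KEY is not set; create a key in the Groq Console and export it."
        )
    # Assumption: Groq keys start with "gsk_". A mismatch is only a warning sign.
    if not key.startswith("gsk_"):
        print("Warning: GROQ_API_KEY does not start with 'gsk_'; check that you copied it fully.")
    return key
```

You can then pass the key to the client explicitly with `Groq(api_key=load_groq_api_key())`. The benefit is that a missing key produces a clear error before any request is sent.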

Free tier includes:

- 2,000,000 tokens per month

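- API calls subject to rate limits (requests and tokens per minute and per day, varying by model; see the Groq Console for current values)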

- All Groq-hosted models available

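Once you hit a free-tier limit, further requests are rejected until the limit resets. To go beyond it, you upgrade to a paid plan billed per million tokens at per-model rates, as low as $0.05 per million tokens (extremely competitive).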
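Budgeting paid usage is simple arithmetic: tokens ÷ 1,000,000 × the per-million rate. Input (prompt) tokens and output (generated) tokens are usually priced separately. The following is a minimal sketch. The function name is hypothetical, and the $0.05 rate in the example is illustrative only, so plug in the current rates for your model from Groq's pricing page.

```python
def estimate_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_usd_per_million: float,
    output_usd_per_million: float,
) -> float:
    """Estimate spend for usage billed per million tokens, with separate input/output rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    cost = (
        input_tokens * input_usd_per_million
        + output_tokens * output_usd_per_million
    ) / 1_000_000
    # Round to avoid floating-point noise in the printed dollar amount.
    return round(cost, 6)


# Illustrative only: 2M prompt tokens + 1M generated tokens at $0.05 per million each.
print(estimate_cost_usd(2_000_000, 1_000_000, 0.05, 0.05))
```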

Step 2: Install the Groq Python SDK

Open your terminal and run:

```bash
pip install groq
```

That's it. The SDK includes everything you need to authenticate and make API calls.
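The SDK is a convenience wrapper. Groq also exposes an OpenAI-compatible REST endpoint, which is useful if you would rather not add a dependency or want to see what the SDK sends. The sketch below uses only Python's standard library to build the equivalent chat request without sending it. The URL is Groq's OpenAI-compatible chat completions endpoint, and `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Groq's OpenAI-compatible chat completions endpoint.
GROQ_CHAT_URL = "https://api.groq.com/openai/v1/chat/completions"


def build_chat_request(
    prompt: str, model: str, api_key: str, max_tokens: int = 150
) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        GROQ_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # same key the SDK uses
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send the request, pass it to `urllib.request.urlopen(req, timeout=30)` and parse the JSON body. The reply text is at `choices[0]["message"]["content"]`, the same shape the SDK exposes as `response.choices[0].message.content`.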

Step 3: Make Your First API Call

Create a new Python file called groq_test.py:

```python
from groq import Groq

# Initialize the client (reads GROQ_API_KEY from the environment)
client = Groq()

# Simple completion request
response = client.chat.completions.create(
    # Fast, open-source model. Model IDs are retired over time; if this one
    # returns an error, substitute a current ID from the Groq Console.
    model="mixtral-8x7b-32768",
    messages=[
        {"role": "user", "content": "What is AI?"}
    ],
    max_tokens=150,
)

print(response.choices[0].message.content)
```

Before running, export your API key and run the script:

```bash
export GROQ_API_KEY="gsk_YOUR_KEY_HERE"
python groq_test.py
```

You should see a response in under 500ms.
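Free-tier requests are rate-limited, and exceeding a limit returns an HTTP 429 error. A common remedy is to retry with exponential backoff. The following is a generic sketch in which `call_with_backoff` is a hypothetical helper. The commented usage assumes the groq SDK raises `groq.RateLimitError` for 429 responses, as OpenAI-style SDKs do, so check the exception name in your installed version. The official SDK may also retry a few times on its own before raising.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_backoff(
    fn: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying with exponential backoff and jitter on the given exceptions."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Wait base_delay, 2x, 4x, ... plus up to 10% jitter so that many
            # clients don't retry in lockstep.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay * 0.1))
    raise AssertionError("unreachable")


# Assumed usage with the groq SDK (check the exception name in your version):
# import groq
# response = call_with_backoff(
#     lambda: client.chat.completions.create(
#         model="mixtral-8x7b-32768",
#         messages=[{"role": "user", "content": "What is AI?"}],
#     ),
#     retry_on=(groq.RateLimitError,),
# )
```

The `sleep` parameter is injectable so the helper can be tested without actually waiting.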

Step 4: Build a Simple Chatbot

Here's a multi-turn chatbot that remembers conversation history:

```python
from groq import Groq

client = Groq()


def chat_with_groq():
    """Simple conversational AI using Groq."""
    messages = []  # full conversation history, resent on every request

    print("Groq Chatbot (type 'quit' to exit)")
    print("-" * 40)

    while True:
        user_input = input("You: ").strip()
        if user_input.lower() == "quit":
            break
        if not user_input:
            continue  # ignore empty lines instead of sending them

        # Add user message to history
        messages.append({"role": "user", "content": user_input})

        # Get response from Groq
        response = client.chat.completions.create(
            model="mixtral-8x7b-32768",  # swap in a current model ID if this one is retired
            messages=messages,
            max_tokens=200,
            temperature=0.7,
        )
        assistant_message = response.choices[0].message.content

        # Add assistant response to history so later turns have context
        messages.append({"role": "assistant", "content": assistant_message})
        print(f"Bot: {assistant_message}\n")


# Run the chatbot
if __name__ == "__main__":
    chat_with_groq()
```

This chatbot maintains conversation history, so it understands context across multiple turns.
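Because the whole `messages` list is resent on every turn, a long conversation uses more tokens per request and can eventually exceed the model's context window (32,768 tokens for `mixtral-8x7b-32768`, as the ID suggests). A simple remedy is a sliding window that keeps any system prompt plus the most recent messages. The following is a minimal sketch in which `trim_history` is a hypothetical helper. Counting messages is only a crude proxy for counting tokens.

```python
from typing import Dict, List

Message = Dict[str, str]


def trim_history(messages: List[Message], max_messages: int) -> List[Message]:
    """Keep system messages plus the most recent user/assistant messages."""
    if max_messages < 0:
        raise ValueError("max_messages must be non-negative")
    system = [m for m in messages if m["role"] == "system"]
    dialogue = [m for m in messages if m["role"] != "system"]
    # dialogue[-0:] would return everything, so handle 0 explicitly.
    recent = dialogue[-max_messages:] if max_messages else []
    # Start the window on a user turn so the model never sees a dangling reply.
    while recent and recent[0]["role"] == "assistant":
        recent = recent[1:]
    return system + recent
```

In the chatbot loop, you would keep appending to the full `messages` list but pass `messages=trim_history(messages, 20)` to `client.chat.completions.create`.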

Model Options on Groq

Here are the fastest available models in 2026:

| Model | Use Case | Speed |
|---|---|---|
| Mixtral 8x7B | General purpose, coding, reasoning | ~150 tok/sec |
| LLaMA 3.1 405B | Complex reasoning, large context | ~120 tok/sec |
| Gemma 2 9B | Lightweight, efficient | ~200 tok/sec |
| Whisper Large | Speech-to-text | Real-time |

All of them are 3–10x faster than running the same model on a GPU.
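Tokens-per-second figures translate into wall-clock time with simple arithmetic: total time ≈ time to first token + output tokens ÷ tokens per second. The following is a small sketch in which the function is hypothetical. The 0.1 s time to first token is an assumed figure, not a measurement.

```python
def estimate_response_seconds(
    output_tokens: int,
    tokens_per_second: float,
    time_to_first_token: float = 0.0,
) -> float:
    """Rough wall-clock time for a response: time to first token + generation time."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if output_tokens < 0:
        raise ValueError("output_tokens must be non-negative")
    return time_to_first_token + output_tokens / tokens_per_second


# Speeds taken from the table above; time_to_first_token is an assumed value.
for model, speed in [("Mixtral 8x7B", 150), ("Gemma 2 9B", 200)]:
    seconds = estimate_response_seconds(300, speed, time_to_first_token=0.1)
    print(f"{model}: ~{seconds:.1f}s for a 300-token answer")
```

The formula also shows why streaming output matters for perceived latency. The first tokens arrive almost immediately, while a long answer still takes a second or two to finish.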

Common Use Cases

Real-time customer support: Sub-100ms responses mean you can power live chatbots without lag.
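For live chat, you usually want to stream tokens to the user as they are generated instead of waiting for the full reply. With the groq SDK, passing `stream=True` to `client.chat.completions.create` returns an iterator of chunks, and each chunk's new text is in `chunk.choices[0].delta.content` (the same shape as OpenAI's streaming format). The following is a sketch of consuming such a stream. `iter_stream_text` and `fake_chunk` are hypothetical helpers. `fake_chunk` mimics the chunk shape so the logic can be tried offline.

```python
from types import SimpleNamespace
from typing import Any, Iterable, Iterator


def iter_stream_text(chunks: Iterable[Any]) -> Iterator[str]:
    """Yield text pieces from an OpenAI-style streaming chat completion."""
    for chunk in chunks:
        if not chunk.choices:
            continue  # skip chunks that carry no choices
        piece = chunk.choices[0].delta.content
        if piece:  # the final chunk's content is typically None
            yield piece


def fake_chunk(text):
    """Hypothetical stand-in with the same shape as a streamed chunk."""
    return SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content=text))])


# Assumed usage with the groq SDK (needs GROQ_API_KEY and network access):
# stream = client.chat.completions.create(
#     model="mixtral-8x7b-32768", messages=messages, stream=True
# )
# for piece in iter_stream_text(stream):
#     print(piece, end="", flush=True)

if __name__ == "__main__":
    offline = [fake_chunk("Hel"), fake_chunk("lo"), fake_chunk(None)]
    print("".join(iter_stream_text(offline)))  # prints "Hello"
```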

Content generation at scale: 2 million tokens per month is roughly 1.5 million words of text for free (at about 0.75 English words per token), counting both your prompts and the generated output.
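Token budgets convert to words with a rule of thumb: English text averages roughly 0.75 words per token (about four characters per token). A budget is typically consumed by the prompts you send as well as the generated text, so the words you actually get back are fewer. The following is a minimal sketch with hypothetical helper names.

```python
import math

# Rule of thumb for English prose; the real ratio depends on the tokenizer
# and varies for code and non-English text.
WORDS_PER_TOKEN = 0.75


def tokens_to_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Approximate how many English words fit in a token budget."""
    return int(tokens * words_per_token)


def words_to_tokens(words: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Approximate how many tokens a given number of words will consume."""
    return math.ceil(words / words_per_token)


monthly_budget = 2_000_000  # free-tier figure used in this guide
print(f"~{tokens_to_words(monthly_budget):,} words")
```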

Voice AI: Combine Groq's Whisper with Mixtral for real-time transcription + AI responses.

Prototyping: Build and test AI features before committing to expensive infrastructure.

Limitations & When to Use Competitors

Groq is not the right choice if:

- You need GPT-4 or Claude exclusively (Groq doesn't host proprietary models)

- You have very large batch jobs (Groq is optimized for latency, not throughput)

- You need enterprise features like audit logs or HIPAA compliance (not yet available at the time of writing)

When to use Groq:

- Real-time inference (chatbots, voice, streaming)

- Cost-sensitive applications (free tier is generous)

- Open-source model preference

- Prototyping before scaling to other APIs

For comparison:

- OpenAI GPT-4: Better reasoning, proprietary, slower (1–3 sec latency)

- Anthropic Claude: Longer context, slower, enterprise-focused

- Groq: Fastest, free tier, open-source models

FAQ

Q: Will I get charged if I stay under 2M tokens? A: No. The free tier is truly free — no credit card required.

Q: How do I use GPT-4 on Groq? A: You can't. Groq only hosts open-source and licensed models (Mixtral, LLaMA, Gemma, Whisper). For proprietary models, use OpenAI's API directly.

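Q: What's the difference between Mixtral 8x7B and LLaMA 3? A: The larger LLaMA 3 models (70B and up) are more capable but slower. Mixtral 8x7B is a mixture-of-experts model that activates only about 13B of its roughly 47B parameters per token, so it is faster and better suited to real-time apps. Test both with your use case.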

Q: Can I fine-tune models on Groq? A: Not yet. Groq is inference-only. For fine-tuning, use other providers.

Q: Is Groq open source? A: The hardware is proprietary, but Groq supports open-source model inference (LLaMA, Gemma, Mixtral). Models themselves are open.

Key Takeaways

- Groq LPU is 3–10x faster than typical GPU inference for real-time AI applications

- Free tier: 2 million tokens/month with no credit card

- Setup takes < 5 minutes: Sign up, get API key, install SDK, run code

- Open-source models: Use Mixtral, LLaMA, Gemma, or Whisper on Groq's infrastructure

- Best for: Real-time chatbots, voice AI, content generation, and prototyping

- Not ideal for: Proprietary models (GPT-4, Claude) or complex enterprise workflows

Ready to get started? Visit console.groq.com and create your free API key now. Your first request will come back in milliseconds — you'll feel the difference immediately.

Alex the Engineer

Founder & AI Architect. Senior software engineer turned AI agency owner. I build massive, scalable AI workflows and share the exact blueprints, financial models, and code I use to generate automated revenue in 2026.
