Mistral Medium 3: The New AI Model That Costs 8x Less Than Claude (Launched Today)

Mistral just released Medium 3, a model benchmarked at 90%+ of Claude Sonnet 3.7 performance at 8x lower cost. Here's what it means for you, and how to start using it today.

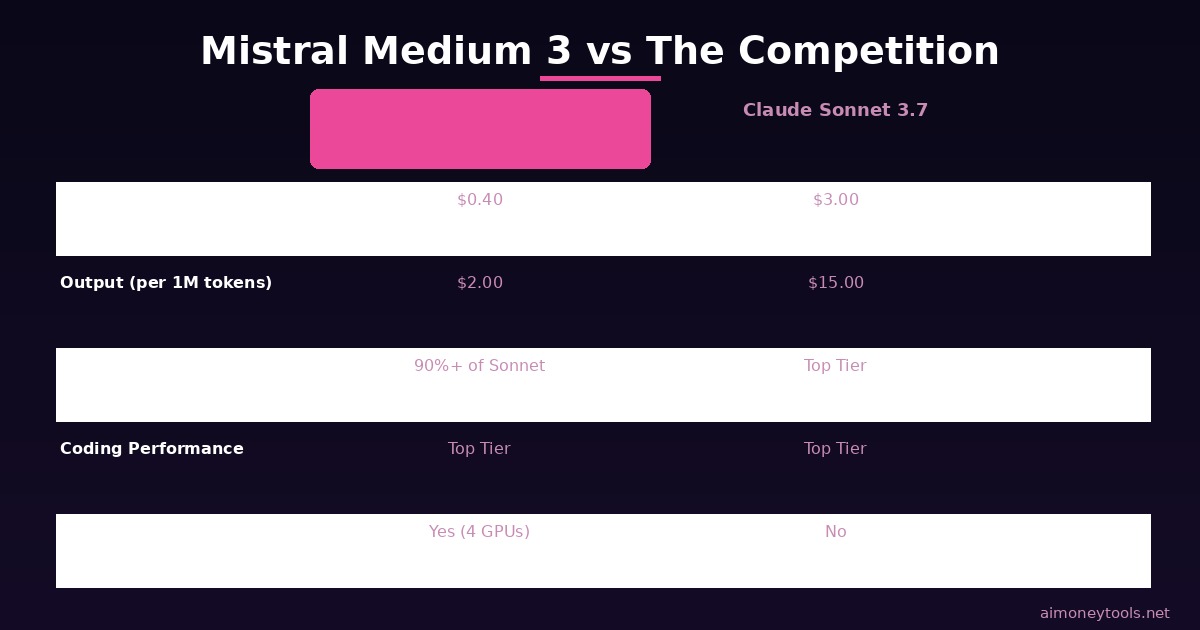

This morning, Mistral AI dropped something that has the AI world paying attention: Mistral Medium 3, a new language model that claims to deliver 90%+ of Claude Sonnet 3.7's performance at 8 times lower cost. (Note: Mistral's official benchmarks used Sonnet 3.7 as the comparison baseline — the current Anthropic lineup is Claude Sonnet 4.6, which raises the bar even further.)

If you've been following AI news, you know how rare that is. Cost reductions at this scale, without a noticeable quality drop, are exactly the kind of thing that shifts how developers, businesses, and individuals interact with AI.

Here's everything you need to know about Mistral Medium 3 — what it is, how it compares, and whether it's worth switching to.

What Is Mistral Medium 3?

Mistral Medium 3 is the newest AI model from Mistral AI, a French AI company that's been quietly one of the most competitive players in the language model space since 2023.

"Medium" in the name doesn't mean mediocre. Mistral's positioning is deliberate: Medium is the new Large. The company is making the argument that you no longer need an expensive, compute-hungry frontier model for most tasks — a well-optimized mid-tier model can match 90% of the performance at a small fraction of the price.

For most people using AI to write content, summarize documents, build automations, or run coding assistants, a performance gap of up to 10% between Claude Sonnet 3.7 and Mistral Medium 3 probably doesn't matter. But the roughly 8x price gap certainly does.

The Numbers: What Does 8x Cheaper Actually Mean?

Mistral Medium 3 is priced at:

- $0.40 per million input tokens

- $2.00 per million output tokens

For context, Claude Sonnet 3.7 runs approximately $3/M input and $15/M output on Anthropic's API.

That's not a small difference. If you're building a product that processes 10 million tokens per day — think a summarization tool, a customer service chatbot, or a document analyzer — the annual cost difference between Sonnet 3.7 and Mistral Medium 3 can run into the tens of thousands of dollars.
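To see where a figure like that comes from, here is a rough sketch of the arithmetic using the list prices quoted above. The 80/20 split between input and output tokens is an assumption for illustration only, and the `annual_cost` helper is a hypothetical name, not part of any SDK.

```python
# Prices in USD per million tokens, as quoted in this article.
PRICES = {
    "mistral-medium-3": {"input": 0.40, "output": 2.00},
    "claude-sonnet-3.7": {"input": 3.00, "output": 15.00},
}


def annual_cost(model: str, tokens_per_day: float, output_share: float) -> float:
    """Estimate yearly API spend for a given daily token volume.

    output_share is the assumed fraction of tokens that are output tokens.
    """
    price = PRICES[model]
    millions_per_day = tokens_per_day / 1_000_000
    daily = millions_per_day * (
        (1 - output_share) * price["input"] + output_share * price["output"]
    )
    return daily * 365


# Assumption: 10M tokens/day, 20% of them output tokens.
sonnet = annual_cost("claude-sonnet-3.7", 10_000_000, 0.20)
mistral = annual_cost("mistral-medium-3", 10_000_000, 0.20)
print(f"Sonnet 3.7:  ${sonnet:,.0f}/year")
print(f"Medium 3:    ${mistral:,.0f}/year")
print(f"Difference:  ${sonnet - mistral:,.0f}/year")
```

With that split the gap is roughly $17,000 per year. The result is sensitive to the mix: an all-input workload narrows it to about $9,500, while an all-output workload widens it to about $47,000.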

Even for individuals and small businesses doing lighter usage, this opens up experimentation that was previously cost-prohibitive.

How Does It Actually Perform?

According to Mistral's official benchmarks and third-party testing, Medium 3:

- Scores at or above 90% of Claude Sonnet 3.7 across major benchmarks

- Outperforms Llama 4 Maverick (Meta's open-source flagship)

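- Competes with cost leaders such as DeepSeek V3 on pricing, according to Mistral, both via API and for self-hosted deployments (though DeepSeek V3's listed API price per token is lower)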

- Leads in coding and STEM tasks — this is where it consistently punches above its weight class

- Strong in multimodal understanding — handles image analysis tasks effectively

The coding performance is the headline result. Mistral tested against Surge AI's human evaluation platform (a third-party benchmark that uses real developers scoring outputs) and Medium 3 came out ahead of several much larger, more expensive models.

For most beginners and business users, the categories that matter most are: writing quality, instruction following, and coding help. Medium 3 covers all three comfortably.

Where Can You Use It?

Mistral Medium 3 is available starting today via:

- Mistral La Plateforme — Mistral's own developer platform. Sign up for an API key and start making calls within minutes.

- Amazon SageMaker — If you're already in the AWS ecosystem, it's available as a managed endpoint.

Coming soon:

- IBM WatsonX

- NVIDIA NIM

- Azure AI Foundry

- Google Cloud Vertex AI

If you're a developer who wants to try the API today, go through La Plateforme. The process is similar to getting a Claude or OpenAI API key — create an account, verify email, add a payment method, generate a key.

Can You Run It Locally?

Yes — and this is where things get interesting. Mistral Medium 3 can be self-hosted on as few as 4 GPUs, making it more accessible to run on your own infrastructure than most models at this performance tier.

This is significant for:

- Businesses with data privacy requirements — healthcare, finance, legal

- Developers who want to avoid per-token costs entirely

- Anyone building on hardware they already own

If you want to run Mistral Medium 3 on your own cloud server, Ampere is worth checking out — ARM-based cloud instances optimized for AI inference workloads that can handle a 4-GPU self-hosted deployment cost-effectively.

Mistral Medium 3 vs. The Competition

Here's how Medium 3 stacks up against the models you've likely already heard of:

| Model | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) | Coding Rank | Self-Hostable |
|---|---|---|---|---|
| Mistral Medium 3 | $0.40 | $2.00 | Top tier | Yes (4 GPUs) |
| Claude Sonnet 4.6 (current) | ~$3.00 | ~$15.00 | Top tier | No |
| Claude Sonnet 3.7 (Mistral benchmark baseline) | ~$3.00 | ~$15.00 | Top tier | No |
| GPT-5.4 (OpenAI) | ~$2.50 | ~$10.00 | Top tier | No |
| DeepSeek V3 | ~$0.27 | ~$1.10 | Very strong | Yes (large) |
| Llama 4 Maverick | Free (open) | Free (open) | Strong | Yes |

The key tradeoff: DeepSeek V3 is cheaper on API, but Mistral beats it in most third-party coding evals and offers cleaner European-based infrastructure (a consideration for GDPR-sensitive use cases). Llama 4 Maverick is free to self-host but requires significantly more GPU memory than Mistral Medium 3.

For most people choosing between paid API options, Medium 3 currently offers the best value-to-performance ratio in this tier.

What's Coming Next from Mistral?

Mistral dropped a teaser at the end of today's announcement: with Mistral Small (released March 2026) and Mistral Medium (today), they're clearly working on something "large" — and they hinted it could be open-sourced.

That's a notable signal. If Mistral releases a large open-source model in the coming weeks that can compete with GPT-5.4 and Claude Sonnet 4.6, the open-source AI landscape would shift dramatically. Their wording — "we're excited to 'open' up what's to come" — strongly implies an open weights release.

We'll be covering that launch the day it drops.

Should You Switch to Mistral Medium 3?

It depends on what you're optimizing for.

Switch if:

- You're building something that processes high volumes of text (the cost savings are real)

- You primarily need strong coding assistance

- You want the option to self-host your AI for privacy or cost reasons

- You're currently on a Claude Sonnet or higher plan and paying more than you'd like

Stick with your current model if:

- You need the absolute best performance for complex reasoning (still Claude Opus 4.7 or GPT-5.4 territory)

- Your workflow is already optimized around a specific API and migration cost isn't worth it

- You specifically need features only Claude or GPT offer (like Claude's 200K token context or OpenAI's image generation)

For beginners exploring AI: Mistral Medium 3 is a solid starting point for API experimentation. The pricing is forgiving enough to run hundreds of tests without significant spend. If you're learning how to use APIs, build automations, or create AI tools — this is a genuinely cost-effective place to start.

How to Get Started With Mistral Medium 3

Getting access takes about 5 minutes:

- Go to console.mistral.ai and create an account

- Add a payment method (no large upfront commitment required)

- Generate an API key from the keys section

- Install the Mistral Python SDK:

    pip install mistralai

- Make your first call:

    from mistralai import Mistral

    client = Mistral(api_key="your-key-here")

    response = client.chat.complete(
        model="mistral-medium-latest",
        messages=[{"role": "user", "content": "What can I build with AI in 2026?"}],
    )

    print(response.choices[0].message.content)

That's it. You're using Mistral Medium 3. The model name in the API is mistral-medium-latest.
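If you would rather not install the SDK, the same call can be sketched with Python's standard library alone. This is a minimal illustration, not Mistral's official client. It assumes the public chat completions endpoint at `https://api.mistral.ai/v1/chat/completions` with Bearer authentication and an API key stored in a `MISTRAL_API_KEY` environment variable. The helper names `build_chat_request` and `ask` are hypothetical.

```python
import json
import os
import urllib.request

# Assumption: Mistral's public chat completions endpoint with Bearer auth.
API_URL = "https://api.mistral.ai/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str,
                       model: str = "mistral-medium-latest") -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the model's reply text."""
    # Read the key from the environment instead of hardcoding it.
    api_key = os.environ["MISTRAL_API_KEY"]
    request = build_chat_request(api_key, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `print(ask("What can I build with AI in 2026?"))` should then print the reply, provided the key is set. Keeping the key in an environment variable rather than in the source file makes it harder to commit it to version control by accident.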

Frequently Asked Questions

Is Mistral Medium 3 really as good as Claude Sonnet? Based on Mistral's official benchmarks (compared against Claude Sonnet 3.7), it reaches 90%+ of those scores. Anthropic's current lineup is Claude Sonnet 4.6, which is stronger, so treat this as a benchmark baseline, not a direct current-generation comparison. For coding and STEM tasks specifically, it's competitive. For creative writing, long-form reasoning, or instruction-following, Claude still has an edge. For the majority of practical tasks (summarization, classification, code assistance, Q&A), the gap is negligible.

Is Mistral a safe company to use? Who owns it? Mistral AI is a French company founded in 2023, backed by major investors including Andreessen Horowitz, Lightspeed, and Nvidia. They're based in Paris and are Europe's most prominent AI model provider. Data processed on La Plateforme is subject to EU data handling standards, which is a meaningful advantage for European businesses.

Can I use Mistral Medium 3 for free? There's no permanent free tier, but Mistral typically offers a small credit for new accounts. At $0.40/M input tokens, even $5 of credit gets you a substantial amount of testing.

What's the difference between Mistral Medium 3 and Mistral Small 3.1? Small 3.1 (released March 2026) is a lighter, faster model optimized for high-throughput simple tasks. Medium 3 is the step up — stronger on complex reasoning, coding, and multimodal tasks, at a still-competitive price.

Can I use Mistral Medium 3 with standard tools like LangChain or LlamaIndex? Yes. Mistral's API is compatible with most standard AI development frameworks. LangChain, LlamaIndex, and many other tools have Mistral integrations. The process is similar to swapping in any other model provider.

What does "8x lower cost" mean in practice? If you currently spend $100/month on a Claude Sonnet API plan, equivalent usage on Mistral Medium 3 would cost roughly $12–15. For businesses at scale, this compounds fast.
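As a quick sanity check on that estimate, the ratio can be worked out directly from the list prices quoted in this article. This is a rough sketch that assumes identical token usage on both models and ignores discounts, caching, or batch pricing.

```python
# List prices in USD per million tokens, as quoted in this article.
SONNET = {"input": 3.00, "output": 15.00}
MEDIUM_3 = {"input": 0.40, "output": 2.00}

input_ratio = SONNET["input"] / MEDIUM_3["input"]
output_ratio = SONNET["output"] / MEDIUM_3["output"]

# Both ratios are the same, so the input/output mix does not change the result.
monthly_sonnet_bill = 100.00
equivalent_bill = monthly_sonnet_bill / input_ratio

print(f"Input ratio:  {input_ratio:.1f}x")
print(f"Output ratio: {output_ratio:.1f}x")
print(f"${monthly_sonnet_bill:.0f}/month becomes about ${equivalent_bill:.2f}/month")
```

At these prices the ratio works out to 7.5x on both input and output, so the headline "8x" is a rounded figure, and a $100 bill lands at a little over $13, inside the $12 to $15 range given above.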

Mistral Medium 3 is a meaningful release. The AI cost curve is continuing to compress, and models like this make serious AI-powered tools more accessible to smaller teams and independent builders.

Whether you switch immediately or keep it on your radar, it's worth understanding what just launched — because competitive pricing from Mistral puts pressure on every other model provider to respond.

Alex the Engineer

Founder & AI Architect

Senior software engineer turned AI agency owner. I build massive, scalable AI workflows and share the exact blueprints, financial models, and code I use to generate automated revenue in 2026.
